[[{“value”:”

As organizations scale their use of generative AI, many workloads require cost-efficient, bulk processing rather than real-time responses. Amazon Bedrock batch inference addresses this need by enabling large datasets to be processed in bulk with predictable performance—at 50% lower cost than on-demand inference. This makes it ideal for tasks such as historical data analysis, large-scale text summarization, and background processing workloads.

In this post, we explore how to monitor and manage Amazon Bedrock batch inference jobs using Amazon CloudWatch metrics, alarms, and dashboards to optimize performance, cost, and operational efficiency.

New features in Amazon Bedrock batch inference

Batch inference in Amazon Bedrock is constantly evolving, and recent updates bring significant enhancements to performance, flexibility, and cost transparency:

- Expanded model support – Batch inference now supports additional model families, including Anthropic’s Claude Sonnet 4 and OpenAI OSS models. For the most up-to-date list, refer to Supported Regions and models for batch inference.

- Performance enhancements – Batch inference optimizations on newer Anthropic Claude and OpenAI GPT OSS models now deliver higher batch throughput as compared to previous models, helping you process large workloads more quickly.

- Job monitoring capabilities – You can now track how your submitted batch jobs are progressing directly in CloudWatch, without the heavy lifting of building custom monitoring solutions. This capability provides AWS account-level visibility into job progress, making it straightforward to manage large-scale workloads.

Use cases for batch inference

AWS recommends using batch inference in the following use cases:

- Jobs are not time-sensitive and can tolerate minutes to hours of delay

- Processing is periodic, such as daily or weekly summarization of large datasets (news, reports, transcripts)

- Bulk or historical data needs to be analyzed, such as archives of call center transcripts, emails, or chat logs

- Knowledge bases need enrichment, including generating embeddings, summaries, tags, or translations at scale

- Content requires large-scale transformation, such as classification, sentiment analysis, or converting unstructured text into structured outputs

- Experimentation or evaluation is needed, for example testing prompt variations or generating synthetic datasets

- Compliance and risk checks must be run on historical content for sensitive data detection or governance

Launch an Amazon Bedrock batch inference job

You can start a batch inference job in Amazon Bedrock using the AWS Management Console, AWS SDKs, or AWS Command Line Interface (AWS CLI). For detailed instructions, see Create a batch inference job.

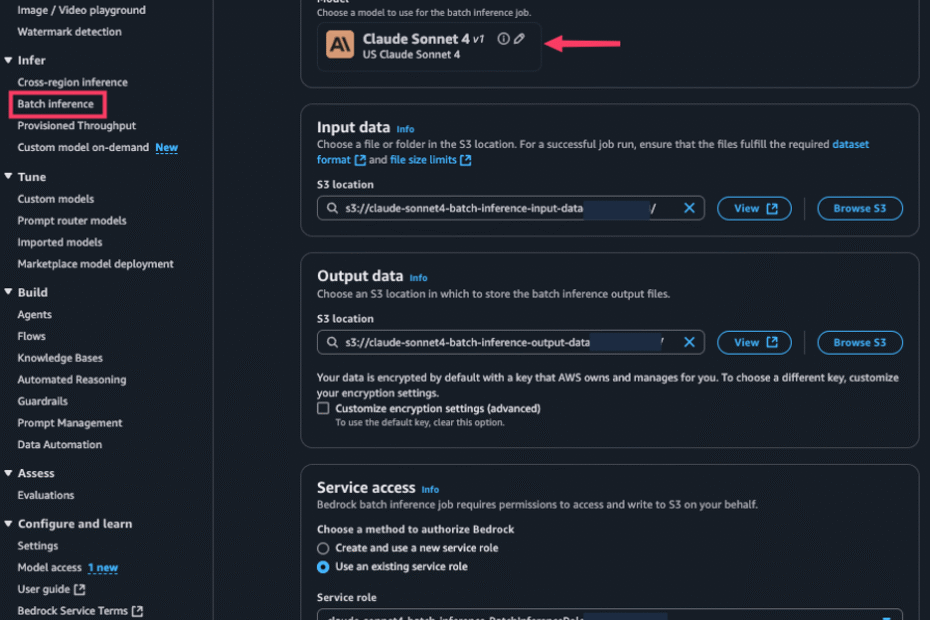

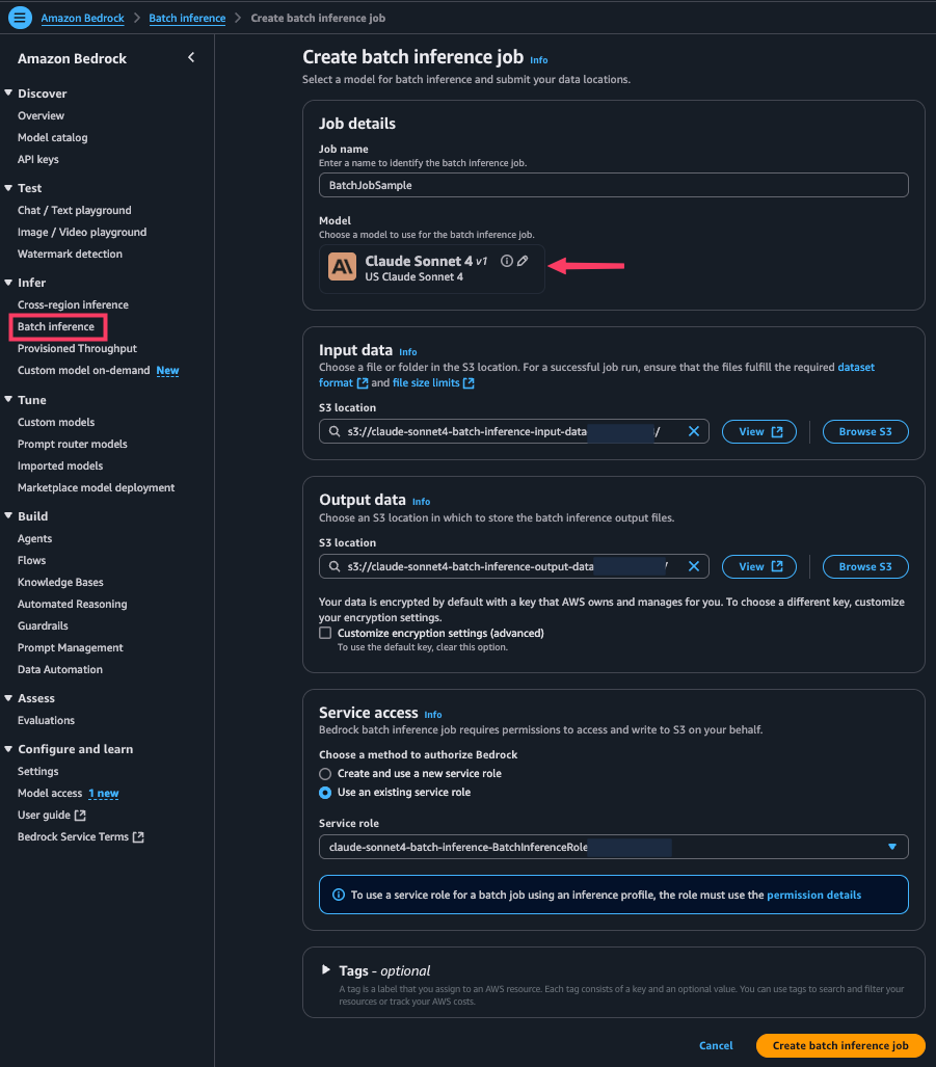

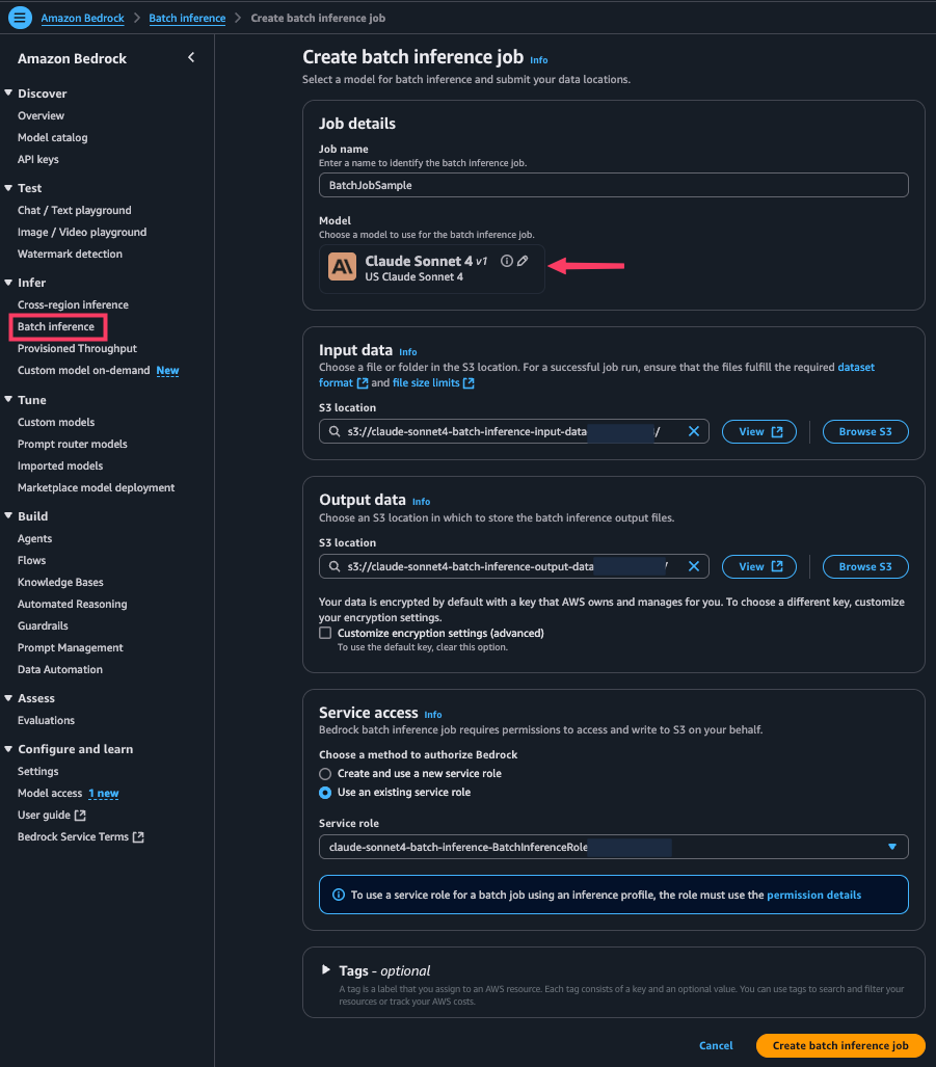

To use the console, complete the following steps:

- On the Amazon Bedrock console, choose Batch inference under Infer in the navigation pane.

- Choose Create batch inference job.

- For Job name, enter a name for your job.

- For Model, choose the model to use.

- For Input data, enter the location of the Amazon Simple Storage Service (Amazon S3) input bucket (JSONL format).

- For Output data, enter the S3 location of the output bucket.

- For Service access, select your method to authorize Amazon Bedrock.

- Choose Create batch inference job.

Monitor batch inference with CloudWatch metrics

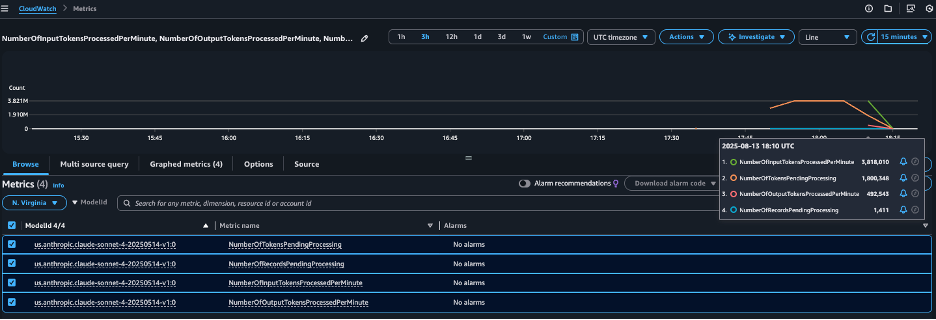

Amazon Bedrock now automatically publishes metrics for batch inference jobs under the AWS/Bedrock/Batch namespace. You can track batch workload progress at the AWS account level with the following CloudWatch metrics. For current Amazon Bedrock models, these metrics include records pending processing, input and output tokens processed per minute, and for Anthropic Claude models, they also include tokens pending processing.

The following metrics can be monitored by modelId:

- NumberOfTokensPendingProcessing – Shows how many tokens are still waiting to be processed, helping you gauge backlog size

- NumberOfRecordsPendingProcessing – Tracks how many inference requests remain in the queue, giving visibility into job progress

- NumberOfInputTokensProcessedPerMinute – Measures how quickly input tokens are being consumed, indicating overall processing throughput

- NumberOfOutputTokensProcessedPerMinute – Measures generation speed

To view these metrics using the CloudWatch console, complete the following steps:

- On the CloudWatch console, choose Metrics in the navigation pane.

- Filter metrics by AWS/Bedrock/Batch.

- Select your

modelIdto view detailed metrics for your batch job.

To learn more about how to use CloudWatch to monitor metrics, refer to Query your CloudWatch metrics with CloudWatch Metrics Insights.

Best practices for monitoring and managing batch inference

Consider the following best practices for monitoring and managing your batch inference jobs:

- Cost monitoring and optimization – By monitoring token throughput metrics (

NumberOfInputTokensProcessedPerMinuteandNumberOfOutputTokensProcessedPerMinute) alongside your batch job schedules, you can estimate inference costs using information on the Amazon Bedrock pricing page. This helps you understand how fast tokens are being processed, what that means for cost, and how to adjust job size or scheduling to stay within budget while still meeting throughput needs. - SLA and performance tracking – The

NumberOfTokensPendingProcessingmetric is useful for understanding your batch backlog size and tracking overall job progress, but it should not be relied on to predict job completion times because they might vary depending on overall inference traffic to Amazon Bedrock. To understand batch processing speed, we recommend monitoring throughput metrics (NumberOfInputTokensProcessedPerMinuteandNumberOfOutputTokensProcessedPerMinute) instead. If these throughput rates fall significantly below your expected baseline, you can configure automated alerts to trigger remediation steps—for example, shifting some jobs to on-demand processing to meet your expected timelines. - Job completion tracking – When the metric

NumberOfRecordsPendingProcessingreaches zero, it indicates that all running batch inference jobs are complete. You can use this signal to trigger stakeholder notifications or start downstream workflows.

Example of CloudWatch metrics

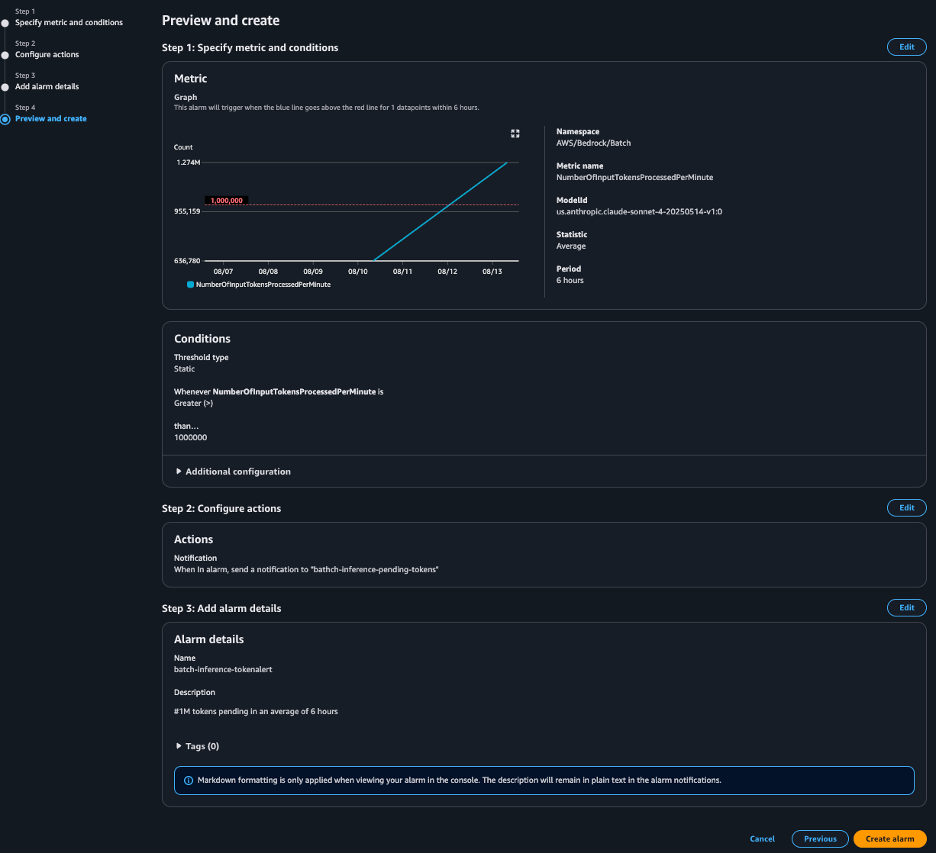

In this section, we demonstrate how you can use CloudWatch metrics to set up proactive alerts and automation.

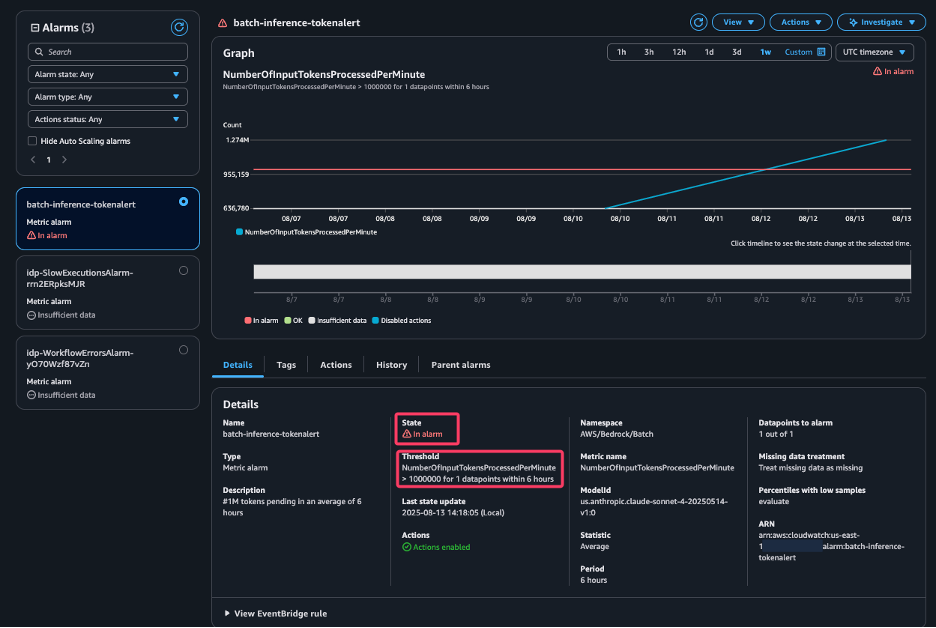

For example, you can create a CloudWatch alarm that sends an Amazon Simple Notification Service (Amazon SNS) notification when the average NumberOfInputTokensProcessedPerMinute exceeds 1 million within a 6-hour period. This alert could prompt an Ops team review or trigger downstream data pipelines.

The following screenshot shows that the alert has In alarm status because the batch inference job met the threshold. The alarm will trigger the target action, in our case an SNS notification email to the Ops team.

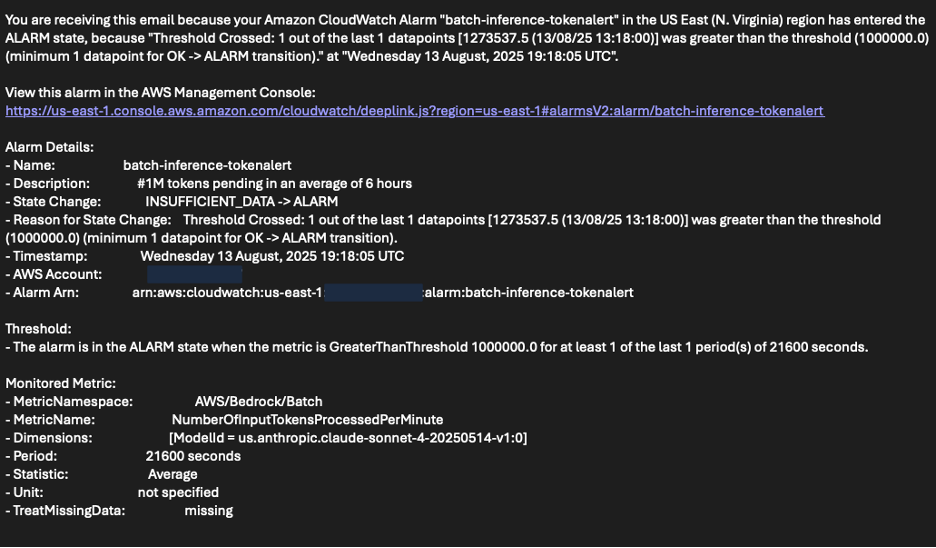

The following screenshot shows an example of the email the Ops team received, notifying them that the number of processed tokens exceeded their threshold.

You can also build a CloudWatch dashboard displaying the relevant metrics. This is ideal for centralized operational monitoring and troubleshooting.

Conclusion

Amazon Bedrock batch inference now offers expanded model support, improved performance, deeper visibility into the progress of your batch workloads, and enhanced cost monitoring.

Get started today by launching an Amazon Bedrock batch inference job, setting up CloudWatch alarms, and building a monitoring dashboard, so you can maximize efficiency and value from your generative AI workloads.

About the authors

Vamsi Thilak Gudi is a Solutions Architect at Amazon Web Services (AWS) in Austin, Texas, helping Public Sector customers build effective cloud solutions. He brings diverse technical experience to show customers what’s possible with AWS technologies. He actively contributes to the AWS Technical Field Community for Generative AI.

Vamsi Thilak Gudi is a Solutions Architect at Amazon Web Services (AWS) in Austin, Texas, helping Public Sector customers build effective cloud solutions. He brings diverse technical experience to show customers what’s possible with AWS technologies. He actively contributes to the AWS Technical Field Community for Generative AI.

Yanyan Zhang is a Senior Generative AI Data Scientist at Amazon Web Services, where she has been working on cutting-edge AI/ML technologies as a Generative AI Specialist, helping customers use generative AI to achieve their desired outcomes. Yanyan graduated from Texas A&M University with a PhD in Electrical Engineering. Outside of work, she loves traveling, working out, and exploring new things.

Yanyan Zhang is a Senior Generative AI Data Scientist at Amazon Web Services, where she has been working on cutting-edge AI/ML technologies as a Generative AI Specialist, helping customers use generative AI to achieve their desired outcomes. Yanyan graduated from Texas A&M University with a PhD in Electrical Engineering. Outside of work, she loves traveling, working out, and exploring new things.

Avish Khosla is a software developer on Bedrock’s Batch Inference team, where the team build reliable, scalable systems to run large-scale inference workloads on generative AI models. he care about clean architecture and great docs. When he is not shipping code, he is on a badminton court or glued to a good cricket match.

Avish Khosla is a software developer on Bedrock’s Batch Inference team, where the team build reliable, scalable systems to run large-scale inference workloads on generative AI models. he care about clean architecture and great docs. When he is not shipping code, he is on a badminton court or glued to a good cricket match.

Chintan Vyas serves as a Principal Product Manager–Technical at Amazon Web Services (AWS), where he focuses on Amazon Bedrock services. With over a decade of experience in Software Engineering and Product Management, he specializes in building and scaling large-scale, secure, and high-performance Generative AI services. In his current role, he leads the enhancement of programmatic interfaces for Amazon Bedrock. Throughout his tenure at AWS, he has successfully driven Product Management initiatives across multiple strategic services, including Service Quotas, Resource Management, Tagging, Amazon Personalize, Amazon Bedrock, and more. Outside of work, Chintan is passionate about mentoring emerging Product Managers and enjoys exploring the scenic mountain ranges of the Pacific Northwest.

Chintan Vyas serves as a Principal Product Manager–Technical at Amazon Web Services (AWS), where he focuses on Amazon Bedrock services. With over a decade of experience in Software Engineering and Product Management, he specializes in building and scaling large-scale, secure, and high-performance Generative AI services. In his current role, he leads the enhancement of programmatic interfaces for Amazon Bedrock. Throughout his tenure at AWS, he has successfully driven Product Management initiatives across multiple strategic services, including Service Quotas, Resource Management, Tagging, Amazon Personalize, Amazon Bedrock, and more. Outside of work, Chintan is passionate about mentoring emerging Product Managers and enjoys exploring the scenic mountain ranges of the Pacific Northwest.

Mayank Parashar is a Software Development Manager for Amazon Bedrock services.

Mayank Parashar is a Software Development Manager for Amazon Bedrock services.

“}]] In this post, we explore how to monitor and manage Amazon Bedrock batch inference jobs using Amazon CloudWatch metrics, alarms, and dashboards to optimize performance, cost, and operational efficiency.  Read More Amazon Bedrock, Amazon CloudWatch, Amazon Machine Learning, Artificial Intelligence, Management Tools, AI/ML

Read More Amazon Bedrock, Amazon CloudWatch, Amazon Machine Learning, Artificial Intelligence, Management Tools, AI/ML